When looking for a new monitor to buy, we primarily focus on factors like refresh rate, resolution, screen size, display type, and a few additional features. But many of us forget to take another crucial characteristic into account: color depth. If your monitor’s color depth falls short, then no matter how good your game’s graphics are, you cannot enjoy them to the fullest.

Many brands offer budget monitors with a minimum of 8-bit color depth. However, monitors with 10-bit or higher color depth are more popular among gamers. But why is a mere 2-bit difference so significant?

The main difference between 10-bit color and 8-bit color is their ability to show colors and variants. “8-bit monitors reach 256 shades per channel, displaying 16.7 million colors. Conversely, 10-bit monitors reach 1,024 shades per channel, displaying 1.07 billion colors. Therefore, 10-bit shows a much smoother transition of color than 8-bit because it has a finer range.”

Now that you know the basic difference between 10-bit color and 8-bit color, let’s dig deeper to understand color depth a bit better. First, we will discuss what color depth actually is.

Key Takeaways

- 10-bit color depth displays over 1 billion colors vs. 16 million on 8-bit, allowing for smoother gradients and transitions.

- 10-bit enables full use of HDR and wide color gamuts, and suits high-resolution content like 4K/8K. 8-bit displays are typically limited to sRGB.

- For gaming, 10-bit is necessary for HDR and vivid, accurate colors. 8-bit can show banding and look dull.

- 10-bit shows movies and videos with complete artistic details. 8-bit may lose subtle gradations.

- For creative work, 10-bit is required to edit and view high bit-depth source material properly.

- Overall, 10-bit offers a more realistic, nuanced, and higher quality viewing experience compared to 8-bit.

- 10-bit color depth has become the new standard for modern displays, especially for gaming and video.

What is Color Depth?

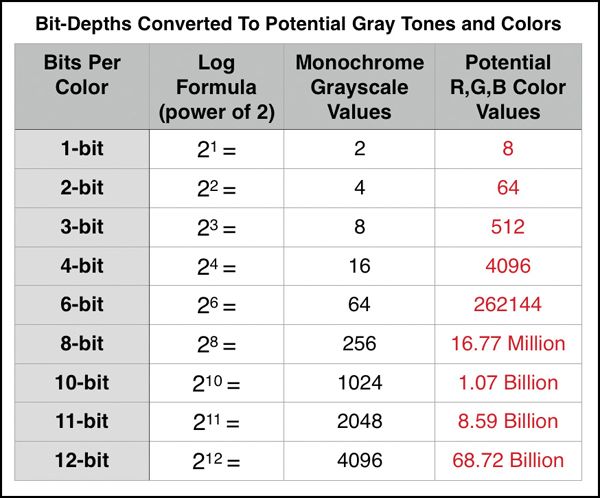

Simply put, color depth measures the number of colors a pixel in an image can display. The higher your monitor’s color depth, the more colors each pixel is able to show. Color depth is expressed in bits, and the scale ranges from 1-bit to 48-bit.

The rising popularity of graphically rich games has increased the demand for monitors with high color depth ratings. It is also an important factor to consider if you want to watch movies or shows with exceptional video quality, such as 4K or 8K. A higher color depth rating will show more colors, transitions, and subtle tones more accurately. In the case of gaming, you will miss out on a more immersive experience using a display with a lower color depth.

The Differences between 10-bit and 8-bit Color

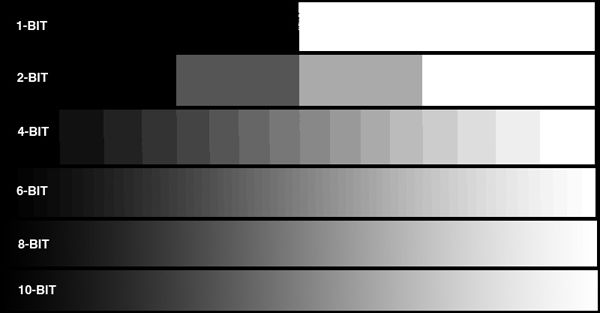

As we have already mentioned, the primary difference between 10-bit and 8-bit color depth is how many colors each pixel displays. This difference translates into how shades and transitions are presented.

However, to help you understand how this works and why it is especially important for gamers, we have broken down our explanation into three sections – the math, the history, and the display quality.

The Math

The color depth math is very simple to understand if you focus for a few minutes. As we have discussed before, each pixel can represent a number of colors. It does so by mixing the three primary colors, red, green, and blue (RGB). Each primary is stored as a number: zero means none of that color, and the maximum value means full intensity.

Now, a display panel with 8-bit color depth has 2^8 or 256 values per color. Each of these values represents a unique tone, shade, or gradation of a primary color. Therefore, we have a total of (256 x 256 x 256) or 16.7 million colors for three primary colors.

Now, let’s do the math for 10-bit panels. Again, each primary color has 2^10 or 1,024 unique gradations. So for three primary colors, a 10-bit panel will display a total of (1024 x 1024 x 1024) or 1.07 billion colors.

Therefore, with four times as many gradations per channel, a 10-bit panel can show better and smoother transitions in images.
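The arithmetic above can be checked with a few lines of Python. This is only a minimal sketch: the function names `levels_per_channel` and `total_colors` are made up for illustration, and it assumes three channels (red, green, blue) with the same bit depth each.

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct values one color channel can take at a given bit depth."""
    return 2 ** bits


def total_colors(bits: int, channels: int = 3) -> int:
    """Total colors for a pixel with `channels` primaries at `bits` per channel."""
    return levels_per_channel(bits) ** channels


for bits in (8, 10):
    print(f"{bits}-bit: {levels_per_channel(bits):,} levels per channel, "
          f"{total_colors(bits):,} colors")

# How many times more colors 10-bit offers compared to 8-bit
ratio = total_colors(10) // total_colors(8)
print(f"10-bit offers {ratio}x as many colors as 8-bit")
```

Running it gives 16,777,216 colors for 8-bit and 1,073,741,824 for 10-bit. Each channel has 4 times as many levels, so across three channels the total is 4 x 4 x 4 = 64 times larger.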

The History

Don’t worry; we will not go on and on about the whole history of color depth technology. We will just mention a snippet of history, so you understand why the difference is significant for gamers.

Initially, when 8-bit color depth was being developed, it was intended to be used with VGA displays. Such displays typically cover only the standard RGB (sRGB) color gamut. Color gamut refers to the range of colors a device can record or produce. It is three-dimensional in the sense that it is described using brightness, hue, and saturation values.

Most 8-bit monitors are not capable of displaying wide color spaces such as DCI-P3 or Adobe RGB. Moreover, due to the color gamut limit, 8-bit monitors cannot correctly display HDR elements, which are very common in modern games such as Ghost of Tsushima, Monster Hunter World, and Death Stranding. You will need a minimum of 10-bit to work with wider color spaces and HDR.

The Display Quality

Color depth determines how accurately an element is displayed on your monitor. Visual elements, videos, and images are loaded with more metadata these days, including high dynamic range (HDR), dots per inch (DPI), focal depth, etc. The higher the color depth value, the more information will be integrated while displaying the element. Therefore, you will get a better display quality.

Panels with 8-bit color depth often display realistic images of adequate quality. But when it comes to modern input sources, they do the bare minimum. Today’s ultra HD 4K and 8K content requires at least 10-bit color depth for a realistic and accurate display. With an 8-bit monitor, you will miss out on the incredible details of such content because it eliminates or compresses color gradations and details while displaying them.
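To get a feel for how gradations are compressed, consider a smooth black-to-white ramp stretched across a row of pixels. The sketch below is a rough illustration, not a model of any real panel: it assumes a 3,840-pixel-wide row, and the helper name `band_count` is hypothetical. It counts how many distinct steps the ramp ends up with at each bit depth.

```python
def band_count(width_px: int, bits: int) -> int:
    """Count the distinct code values a full-range ramp uses across width_px pixels."""
    max_code = 2 ** bits - 1
    # Map each pixel position to the nearest available code value
    codes = {round(x / (width_px - 1) * max_code) for x in range(width_px)}
    return len(codes)


WIDTH = 3840  # assumed width of a 4K row of pixels

for bits in (8, 10):
    bands = band_count(WIDTH, bits)
    print(f"{bits}-bit: {bands} steps, about {WIDTH / bands:.2f} px per step")
```

Under these assumptions the 8-bit ramp has 256 steps of about 15 pixels each, while the 10-bit ramp has 1,024 steps of under 4 pixels each. Wider steps are easier to spot as visible bands, especially in subtle gradients such as skies or shadows.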

Now, for general office work or basic daily use, this difference may not bother anyone. However, for movie and show enthusiasts, gamers, photographers, and video professionals, the color depth difference has a great impact. The poor display quality of an 8-bit monitor, not seeing enough color gradations, and a more washed-out view may not be acceptable to many of them.

Why Choose 10-bit Color Depth?

10-bit color depth has become the new standard because it offers many benefits compared to 8-bit color. Here are some of the key advantages of using a 10-bit display:

- Displays over 1 billion colors, 64 times as many as 8-bit (4x the shades per channel), which enables remarkably smooth color gradients and transitions.

- Works seamlessly with HDR, wide gamuts like DCI-P3, and high resolution content like 4K/8K.

- Offers superior color accuracy essential for color-critical work in design, photography, and video editing.

- Enhances gaming visuals with full HDR support, vivid colors, and reduced banding artifacts.

- Shows movies and videos with all subtleties and artistic details intact.

- Future-proof for the inevitable rise of HDR and higher resolution media.

- More realistic, nuanced, and lifelike image quality.

With these significant benefits, 10-bit color provides a major upgrade in display quality and viewing experience compared to 8-bit.

10-bit vs 8-bit Color for Gaming

If you mostly play classic games and are okay with compromising on graphical fidelity, 8-bit monitors will be good enough for you. However, if you want to enjoy the phenomenal level of detail and immersive experience of playing at 4K HDR quality that modern games offer, you will need a 10-bit monitor.

Furthermore, many of today’s games are designed with HDR output in mind, which calls for a minimum of 10-bit color depth. Unlike 8-bit monitors, 10-bit monitors can properly work with Dolby Vision and HDR10+. If you force such high-quality visual content to run on an 8-bit monitor, you will get a duller view where color banding will be annoyingly noticeable. Therefore, 10-bit offers a more reliable display quality for gamers.

10-bit vs 8-bit Color for Watching Movies

For enthusiastic movie watchers, the source content quality is extremely important. If you are one of them, a 10-bit monitor will serve your purpose better. When you watch content with all the visual details and in the quality the creators intended, you get to experience the full vision of the film. However, if you are more of a laid-back viewer who does not mind missing out on that level of experience, an 8-bit monitor will work decently for you.

10-bit vs 8-bit Color for Designers, Video Editors, and Photographers

Graphics designers, video editors, and photographers must work with the highest display quality possible. The higher color depth of a 10-bit monitor allows illustrators, animators, and graphics designers to see and work with a broader range of colors, producing better-quality output. In contrast, an 8-bit monitor will limit their scope of creativity and will not show enough realistic visual elements.

For photographers, videographers, and video editors, 10-bit color depth is essential. Many modern cameras, including DSLR cameras, can capture 10-bit to 12-bit color or higher. An 8-bit monitor will not show you the real version of what you have captured. Moreover, when you need to post-process or edit a video or image, you cannot produce the best output without seeing the source quality and details.

10-bit vs 8-bit Color: Which One to Pick?

Now that you understand the differences between 10-bit and 8-bit color depth, you can decide which one is for you based on your needs.

“Whether you are a gamer, movie buff, video editor, graphics designer, or photographer, if you want to experience visual content to the fullest, the 10-bit color depth is for you. However, if you do not mind the difference in display quality or have a tight budget, then 8-bit color depth will be adequate.”

But eventually, with the inevitable rise of ultra HD 4K and 8K, it is wise to move towards 10-bit or a higher color depth.

Frequently Asked Questions

Q: Is 10-bit color backwards compatible with 8-bit?

A: Yes, 10-bit monitors can display 8-bit color without issues. 8-bit content simply uses a subset of the gradations a 10-bit panel offers, and 10-bit content can be reduced to 8-bit if needed.
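As a rough illustration of why the two depths are compatible, the sketch below converts a single channel value between them. Bit replication is one common way to widen a value, chosen here for simplicity; it is an assumption, not a description of how any particular monitor or graphics card does it. The function names are hypothetical.

```python
def expand_8_to_10(value: int) -> int:
    """Widen an 8-bit channel value (0-255) to 10 bits (0-1023) by bit replication."""
    if not 0 <= value <= 255:
        raise ValueError("8-bit values must be between 0 and 255")
    # Shift up by two bits, then fill the new low bits with the top two bits
    return (value << 2) | (value >> 6)


def reduce_10_to_8(value: int) -> int:
    """Narrow a 10-bit channel value (0-1023) to 8 bits by dropping the low two bits."""
    if not 0 <= value <= 1023:
        raise ValueError("10-bit values must be between 0 and 1023")
    return value >> 2


for v in (0, 128, 255):
    print(v, "->", expand_8_to_10(v))
```

With this approach black stays at 0, full intensity maps from 255 to 1023, and every 8-bit value survives a round trip unchanged. Going the other way, four neighboring 10-bit values collapse into one 8-bit value, which is where the finer gradations are lost.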

Q: Do I need special cables for 10-bit?

A: For the full 10-bit experience, a DisplayPort 1.4 or HDMI 2.0 (or newer) connection is recommended. Older cables and ports may be limited to 8-bit, especially at high resolutions and refresh rates.
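A back-of-the-envelope estimate shows why the connection matters. The sketch below computes the uncompressed data rate for the visible pixels only, assuming full RGB with no chroma subsampling and ignoring blanking overhead, so real requirements are somewhat higher. The link capacities are assumptions taken from commonly published figures (roughly 14.4 Gbit/s of usable data for HDMI 2.0 and 25.92 Gbit/s for DisplayPort 1.4), and the function name is hypothetical.

```python
def active_data_rate_gbps(width: int, height: int, refresh_hz: int,
                          bits_per_channel: int, channels: int = 3) -> float:
    """Uncompressed data rate for the visible pixels only, in Gbit/s."""
    return width * height * refresh_hz * bits_per_channel * channels / 1e9


# Assumed usable data rates in Gbit/s (commonly published figures, not measured)
ASSUMED_LINK_GBPS = {"HDMI 2.0": 14.4, "DisplayPort 1.4": 25.92}

for bits in (8, 10):
    rate = active_data_rate_gbps(3840, 2160, 60, bits)
    print(f"4K 60 Hz at {bits}-bit RGB needs at least {rate:.2f} Gbit/s")
    for link, capacity in ASSUMED_LINK_GBPS.items():
        verdict = "fits" if rate <= capacity else "does not fit"
        print(f"  {link} (~{capacity} Gbit/s): {verdict}")
```

Under these assumptions, 4K at 60 Hz needs about 11.94 Gbit/s at 8-bit and about 14.93 Gbit/s at 10-bit. That is why a link with less headroom may fall back to 8-bit or to chroma subsampling at high resolutions, while lower resolutions leave plenty of room for 10-bit.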

Q: Is 10-bit only useful for HDR content?

A: No, 10-bit improves SDR content as well by reducing banding artifacts and enabling a wider color gamut.

Q: Is 10-bit color necessary for 1440p or 1080p gaming?

A: At lower resolutions like 1440p or 1080p, the benefits of 10-bit are less pronounced but can still improve quality.

Q: Does Windows 10 support 10-bit properly?

A: Yes, Windows 10 supports 10-bit color with proper graphics drivers installed. Enable 10-bit output in your display or graphics driver settings.

Q: Is 10-bit color only beneficial on large screens?

A: Not necessarily – the advantages of 10-bit color depth apply to smaller monitors too. However, they are most noticeable on larger screens.

Q: Are 10-bit monitors more expensive?

A: Generally yes, 10-bit monitors command a premium over 8-bit monitors with similar specs. The price difference can range from $50 to $300+ depending on the display.

Nafiul Haque, having grown up on major gaming platforms, began his journey as a journalist covering gaming news, reviews, and leaks. His passion evolved into a deep interest in gaming hardware. Now, he writes about everything from gaming laptop reviews to comparing the latest GPUs and consoles.