The GPT series of language models has been a major breakthrough in natural language processing, with each iteration improving on the previous one. ChatGPT, built on GPT-3.5, the latest model in the series, has set a new standard for AI-generated, human-like responses to user queries. OpenAI may soon release its newest large language model, GPT-4, and many are eagerly anticipating it, hoping for even more impressive advancements.

OpenAI, the creator of the well-known models ChatGPT and DALL-E, has been developing this next-generation model. According to Andreas Braun, CTO of Microsoft Germany, in a conversation with German news site Heise, “We will introduce GPT-4 next week… we will have multimodal models that will offer completely different possibilities, for example, videos.” If GPT-4 is multimodal, it could potentially work with more than text alone, which means it might be able to respond to users’ inquiries in the form of images, music, and videos.

What do we know so far about GPT-4?

Apart from its multimodal abilities, GPT-4 could also address ChatGPT’s slow responses to user queries. The next-generation language model is expected to answer much more quickly and in a more human-like manner.

Reportedly, OpenAI could also be working on a mobile app powered by GPT-4. Notably, ChatGPT is a web-based chatbot and does not have a mobile app yet.

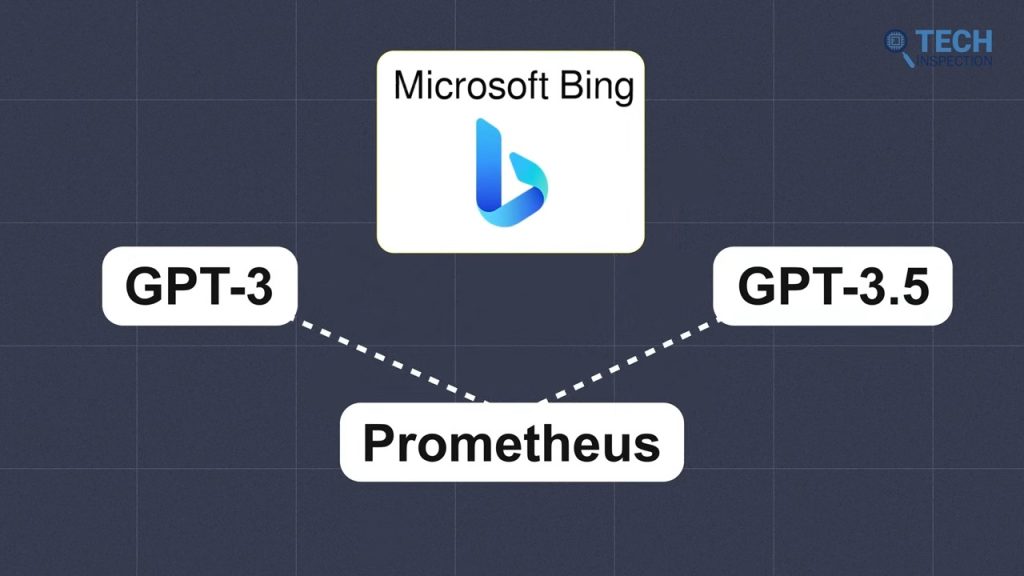

While both Microsoft and OpenAI are tight-lipped about integrating GPT-4 into Bing search (possibly due to the recent controversies surrounding the search assistant), it is highly likely that GPT-4 will be used in Bing chat.

While ChatGPT is built on top of GPT-3.5, Microsoft’s Bing search uses GPT-3 and GPT-3.5 along with proprietary tech called Prometheus to churn out answers quickly while making use of real-time information.

Potential Use Cases of GPT-4

- Language translation: GPT-4’s ability to understand and generate natural language text could make it useful for machine translation applications. It could be trained on a large dataset of translated texts to improve its accuracy and fluency.

- Text summarization: GPT-4’s ability to generate human-like text could be useful for tasks such as text summarization, where the output needs to be easy to read and understand.

- Question answering: GPT-4 is expected to be capable of answering questions and providing detailed explanations, which could be useful for applications such as customer service or technical support.

- Image and video generation: Like its predecessors, GPT-4 is expected to be built on the Transformer architecture, which has been shown to be effective for a variety of machine learning tasks, including computer vision. This means that GPT-4 could potentially be used for tasks such as image and video generation.

- Other applications: GPT-4’s versatility and adaptability make it a promising tool for a wide range of natural language processing tasks. It could be used in areas such as chatbots, automated news writing, and even creative writing.
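
As a rough illustration of how the text-based use cases above (translation, summarization, question answering) might be framed as prompts, here is a minimal Python sketch. It only builds the request payload and does not call any model. The template wording, the `TASK_TEMPLATES` table, and the `build_messages` helper are hypothetical names introduced for this example, and whether GPT-4 will accept this role/content message layout is an assumption.

```python
from typing import Dict, List

# Hypothetical prompt templates for the text-based use cases listed above.
# The wording is illustrative only; it is not taken from any OpenAI documentation.
TASK_TEMPLATES: Dict[str, str] = {
    "translate": "Translate the following text into {target}:\n\n{text}",
    "summarize": "Summarize the following text in at most {max_sentences} sentences:\n\n{text}",
    "answer": "Answer the question using only this context.\n\nContext: {context}\n\nQuestion: {text}",
}


def build_messages(task: str, text: str, **options: object) -> List[Dict[str, str]]:
    """Build a chat-style message list (role/content pairs) for one task.

    Assumption: the model is reached through a chat-style interface that
    takes a list of role/content messages. No request is sent here.
    """
    if task not in TASK_TEMPLATES:
        raise ValueError(f"unknown task: {task!r}")
    # Fill the template with the user's text and any task-specific options.
    prompt = TASK_TEMPLATES[task].format(text=text, **options)
    return [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": prompt},
    ]


if __name__ == "__main__":
    for message in build_messages("translate", "Guten Morgen", target="English"):
        print(message["role"], "->", message["content"])
```

Keeping the task wording in one table means a new use case, such as a chatbot persona or a news-writing brief, would only need one more template entry rather than new code.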

Wrapping Up

Based on the progression of the GPT series, it is reasonable to expect that GPT-4 will have more parameters and improved capabilities compared to GPT-3. With the potential for more accurate and diverse responses, GPT-4 could be a game-changer in natural language processing, paving the way for a wide range of innovations.

However, in a recent interview with StrictlyVC, OpenAI CEO Sam Altman was asked if GPT-4 will come out in the first quarter or half of the year, as many expect. He offered no definite timeframe. “It’ll come out at some point when we are confident we can do it safely and responsibly,” he said. So only time will tell.

Samiul Haque’s fascination with sci-fi movies and their high-tech gadgets led him to become a dedicated content creator. With smart home devices gaining popularity, he now reviews everything from smart speakers and appliances to the latest smartphones and smartwatches, sharing his insights.