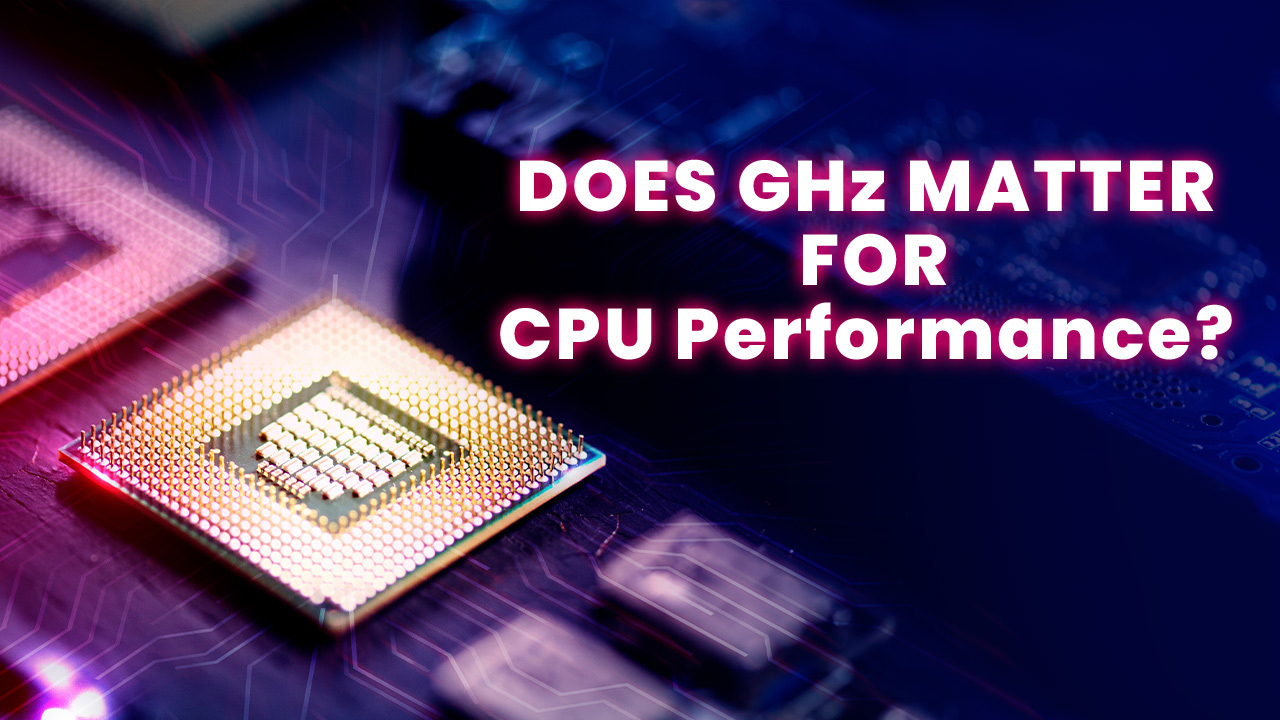

For years, GHz (gigahertz) has been used as an easy way to compare the performance of CPUs (central processing units). The higher the GHz, the faster the CPU, right? Put another way, does GHz matter in a CPU? Well, not exactly. While GHz does matter, it’s far from the only factor that determines real-world CPU performance. There are many other architectural factors at play.

While GHz provides a baseline indication of CPU speed, real-world performance depends on many other aspects like instructions per clock (IPC), core counts, caching, branch prediction, and physical layout. Extensive benchmarking across diverse workloads is required to accurately compare different CPUs.

In this beginner’s guide, we’ll take a deeper look at why GHz ratings can be misleading. We’ll break down what goes on “under the hood” in modern CPU design and how that impacts performance.

What Is CPU Clock Speed Exactly?

First, let’s demystify what CPU clock speed measures.

The “clock” refers to the pulse that synchronizes all the components in a CPU. Like the ticking of a clock, this pulse occurs at a set frequency. For example, a 1.2 GHz CPU pulses 1.2 billion times per second.

Each pulse allows the CPU to execute a cycle where it processes instructions. So a higher gigahertz rating means more cycles per second.
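To put numbers on this, here is a minimal Python sketch that converts a GHz rating into cycles per second and the length of one cycle. The function names are made up for illustration and are not part of any library.

```python
def cycles_per_second(clock_ghz: float) -> float:
    """Convert a clock speed in GHz to clock cycles per second."""
    return clock_ghz * 1e9


def cycle_time_ns(clock_ghz: float) -> float:
    """Duration of a single clock cycle in nanoseconds (1 / frequency in GHz)."""
    return 1.0 / clock_ghz


for ghz in (1.2, 3.0, 5.0):
    print(
        f"{ghz} GHz -> {cycles_per_second(ghz):.2e} cycles/s, "
        f"{cycle_time_ns(ghz):.3f} ns per cycle"
    )
```

A 1.2 GHz CPU gets a little under a nanosecond per cycle, while a 5 GHz CPU gets only a fifth of a nanosecond.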

That sounds simple enough. More cycles equals more work done, right?

Well, keep in mind that not all cycles are created equal. The amount of work done per cycle depends on other aspects of the CPU.

Why Raw GHz Ratings Can Be Misleading

Back in the early 2000s, clock speed was a useful metric. Early single-core CPUs from AMD and Intel were similar in design. The gigahertz measurement provided a rough estimate of performance between models.

At the time, AMD and Intel were locked in a “megahertz war” trying to push clock speeds higher each generation. This period of close competition led to rapid advances in GHz. Learn more about the history of AMD vs Intel here.

But modern CPUs are extremely complex. Small architectural differences can have a huge impact on real-world speed despite the same GHz.

Let’s walk through some examples that demonstrate why using GHz alone to compare performance can be misleading:

Instructions Per Clock (IPC)

This measures how many instructions a CPU processes per cycle. Thanks to optimizations like pipelining, out-of-order execution, and superscalar execution, modern CPUs can work on multiple instructions simultaneously during one clock pulse.

So a 3 GHz CPU with a higher IPC can get more work done than a 4 GHz CPU with a lower IPC.
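A rough way to see this is to multiply IPC by clock speed. The sketch below uses two hypothetical CPUs; the IPC values are invented for illustration, and real IPC varies a lot from one workload to another.

```python
def instructions_per_second(ipc: float, clock_ghz: float) -> float:
    """Rough throughput estimate: instructions per cycle times cycles per second."""
    return ipc * clock_ghz * 1e9


# Hypothetical chips: the IPC figures are assumptions, not measurements.
cpu_a = instructions_per_second(ipc=4.0, clock_ghz=3.0)  # lower clock, higher IPC
cpu_b = instructions_per_second(ipc=2.5, clock_ghz=4.0)  # higher clock, lower IPC

print(f"CPU A (3 GHz): {cpu_a / 1e9:.1f} billion instructions/s")
print(f"CPU B (4 GHz): {cpu_b / 1e9:.1f} billion instructions/s")
print(f"CPU A is {cpu_a / cpu_b:.2f}x as fast as CPU B")
```

In this made-up case, the 3 GHz chip comes out about 20% ahead even though it is 1 GHz behind on paper.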

As an example, AMD’s Zen 3 cores have around a 19% IPC advantage over their previous Zen 2 cores. This allows a Zen 3 chip like the 5800X3D to outperform a higher GHz Zen 2 chip like the 3950X in many games and lightly threaded tasks.

Cores and Threads

Having more cores allows a CPU to process multiple tasks simultaneously. This improves performance for multi-threaded workloads like video editing, 3D rendering, and programming compilers.

Most consumer CPUs today have between 4 and 16 cores, while server CPUs can have 64 cores or more!

In addition, simultaneous multithreading (SMT), which Intel markets as Hyper-Threading, lets each physical core run two hardware threads at once. This can further boost multi-tasking efficiency.

So higher core and thread counts can significantly impact real-world speed despite a lower gigahertz rating. An 8-core Ryzen chip can easily outpace a 4-core Intel chip with a 1 GHz higher clock speed.

However, more cores only help up to a point depending on the application. Check out our guide on how to find the optimal core count for your specific workload.
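One common way to reason about this limit is Amdahl’s law, which estimates the speedup from extra cores based on how much of a program can run in parallel. The sketch below is a simplified model: the parallel fractions are assumed values, and it ignores real-world overheads like memory bandwidth and thread synchronization.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Theoretical speedup over one core when only part of the work is parallel."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Assumed workloads: 50% parallel (e.g. a lightly threaded app) vs 90% parallel.
for fraction in (0.5, 0.9):
    for cores in (4, 8, 16):
        speedup = amdahl_speedup(fraction, cores)
        print(f"{fraction:.0%} parallel on {cores:2d} cores: {speedup:.2f}x")
```

With a 50% parallel workload, even 16 cores cannot reach a 2x speedup, which is why core count matters much more for rendering than for lightly threaded apps.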

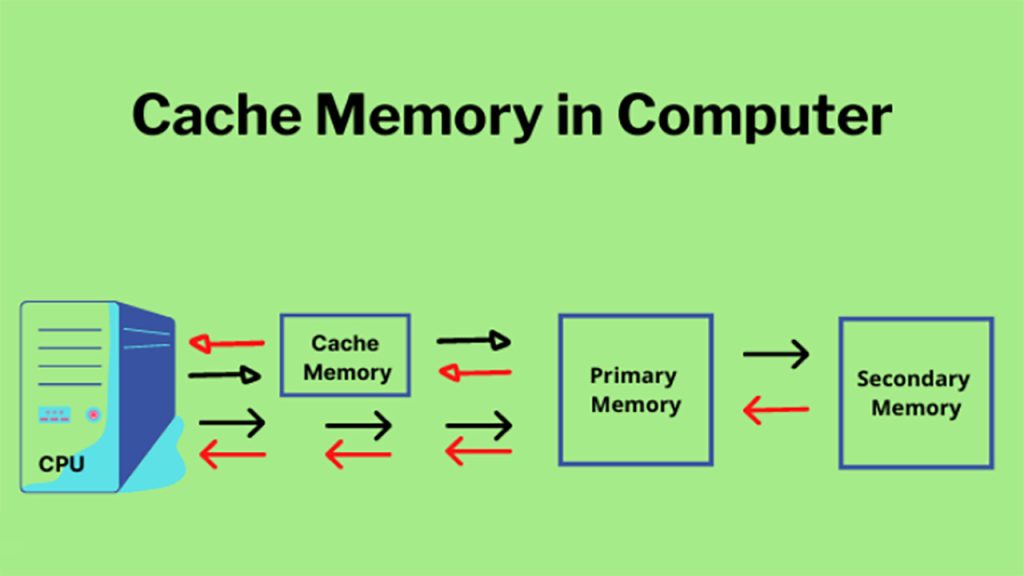

Cache Memory

Cache refers to small amounts of fast memory built right onto the CPU itself. Typical cache sizes range from a few MB up to around 30MB for many high-end chips, and chips with stacked cache like AMD’s Ryzen 7 5800X3D go as high as 96MB.

Having more cache available allows a CPU to access data much faster compared to reading from slower DRAM system memory. Some tasks see huge performance gains with a larger cache.

So optimizations like adding more cache levels and increasing cache size per core are easy ways to boost real-world CPU speed without pushing GHz.
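The effect of cache can be estimated with the standard average memory access time formula: hit time plus miss rate times miss penalty. The latencies and miss rates below are assumed round numbers for illustration, not measurements of any particular CPU.

```python
def average_access_time_ns(
    hit_time_ns: float, miss_rate: float, miss_penalty_ns: float
) -> float:
    """Average memory access time: every access pays the hit time,
    and the fraction that misses also pays the trip to DRAM."""
    return hit_time_ns + miss_rate * miss_penalty_ns


# Assumed values: 4 ns cache hit, 80 ns extra for a trip to DRAM.
small_cache = average_access_time_ns(4.0, miss_rate=0.10, miss_penalty_ns=80.0)
large_cache = average_access_time_ns(4.0, miss_rate=0.05, miss_penalty_ns=80.0)

print(f"Smaller cache (10% misses): {small_cache:.1f} ns per access")
print(f"Larger cache  (5% misses):  {large_cache:.1f} ns per access")
```

Under these assumptions, halving the miss rate cuts the average access time by a third without touching the clock speed.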

Memory Support

The memory standards that a CPU supports can also impact performance. Support for faster RAM through standards like DDR4-3600 and DDR5-5200 allows the CPU to access data quicker compared to older DDR3 memory.

Higher memory bandwidth from technologies like quad-channel DDR4 can further remove bottlenecks, especially for memory-intensive workloads.
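Peak theoretical memory bandwidth can be estimated from the transfer rate, the bus width, and the number of channels. The sketch below assumes a 64-bit data bus per channel; real-world bandwidth is lower than these peak figures, and the function name is made up for illustration.

```python
def peak_bandwidth_gb_s(
    transfer_rate_mt_s: int, channels: int, bus_width_bits: int = 64
) -> float:
    """Peak theoretical bandwidth in GB/s.

    transfer_rate_mt_s is the number after the dash in names like DDR4-3600
    (million transfers per second). Assumes a 64-bit bus per channel.
    """
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mt_s * 1e6 * bytes_per_transfer * channels / 1e9


configs = [
    ("DDR3-1600 dual-channel", 1600, 2),
    ("DDR4-3600 dual-channel", 3600, 2),
    ("DDR5-5200 dual-channel", 5200, 2),
    ("DDR4-3200 quad-channel", 3200, 4),
]
for name, rate, channels in configs:
    print(f"{name}: {peak_bandwidth_gb_s(rate, channels):.1f} GB/s")
```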

Branch Prediction

Modern CPUs utilize branch prediction systems to guess which code path a program will take when it hits a conditional branch instruction like “IF x then y”. If guessed correctly, the CPU avoids stalls and can execute speculatively down the predicted path.

More advanced branch predictors guess correctly more often. This reduces wasted cycles from mispredictions and improves real-world performance.
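To make this concrete, here is a toy simulation of a 2-bit saturating counter, a classic textbook predictor. It is a teaching sketch only: predictors in modern CPUs are far more sophisticated, and the branch patterns below are invented examples.

```python
def simulate_two_bit_predictor(outcomes, initial_state: int = 2) -> float:
    """Return the fraction of branches predicted correctly.

    The counter has four states: 0 and 1 predict "not taken",
    2 and 3 predict "taken". Each actual outcome nudges the counter
    one step toward that outcome. Expects a non-empty list of booleans.
    """
    state = initial_state
    correct = 0
    for taken in outcomes:
        predicted_taken = state >= 2
        if predicted_taken == taken:
            correct += 1
        # Saturate at 0 and 3 so one odd outcome does not flip a strong prediction.
        state = min(state + 1, 3) if taken else max(state - 1, 0)
    return correct / len(outcomes)


# A loop branch: taken 9 times, then not taken once when the loop exits.
loop_branch = ([True] * 9 + [False]) * 100
# A branch that flips every time, which this simple predictor handles badly.
alternating = [True, False] * 500

print(f"Loop branch accuracy:        {simulate_two_bit_predictor(loop_branch):.0%}")
print(f"Alternating branch accuracy: {simulate_two_bit_predictor(alternating):.0%}")
```

The predictable loop branch is guessed right most of the time, while the alternating branch is no better than a coin flip for this simple design. Handling harder patterns like that is where more advanced predictors earn their keep.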

Physical Layout

Even the physical layout and proximity of components on the silicon die play a role. Placing components closer together reduces the physical distance signals have to travel. This can provide small improvements in latency, power efficiency, and speed.

As you can see, CPU architecture and design are complex! There are many nuances beyond just GHz that impact real-world speed.

Real-World Performance Comparisons

Let’s look at some real-world examples that demonstrate why raw GHz ratings fail to tell the whole story:

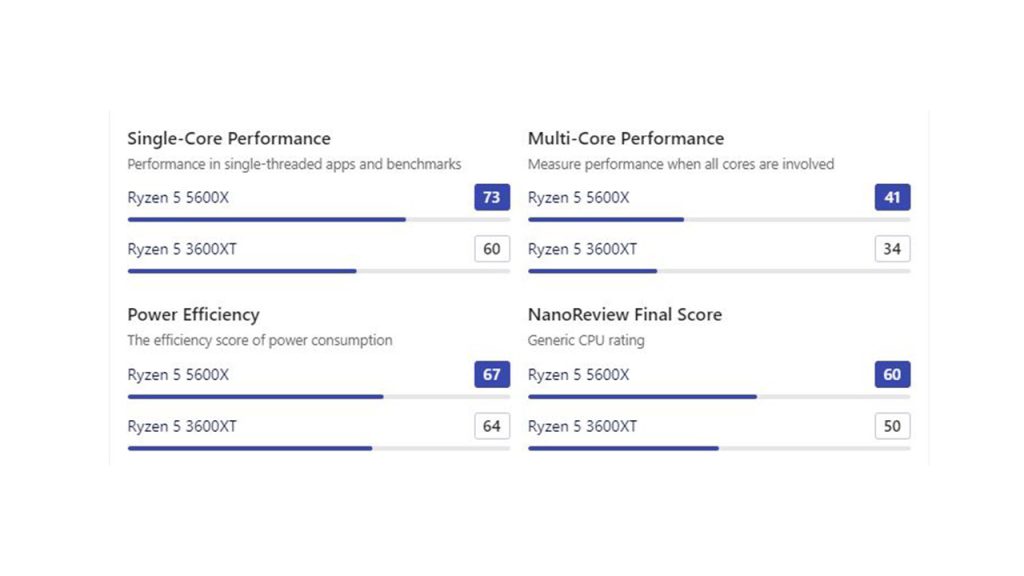

AMD Ryzen 3600XT vs 5600X

The Ryzen 5 3600XT has a slightly higher base clock than the Ryzen 5 5600X (3.8GHz vs 3.7GHz), and its 4.5GHz boost clock is within 100MHz of the 5600X’s 4.6GHz. For more, check the full comparison.

Based on GHz alone, the two chips should perform almost identically. However, thanks to a redesigned Zen 3 core with 19% higher IPC, the 5600X is around 15-20% faster in single and multi-core workloads despite the near-identical frequencies.

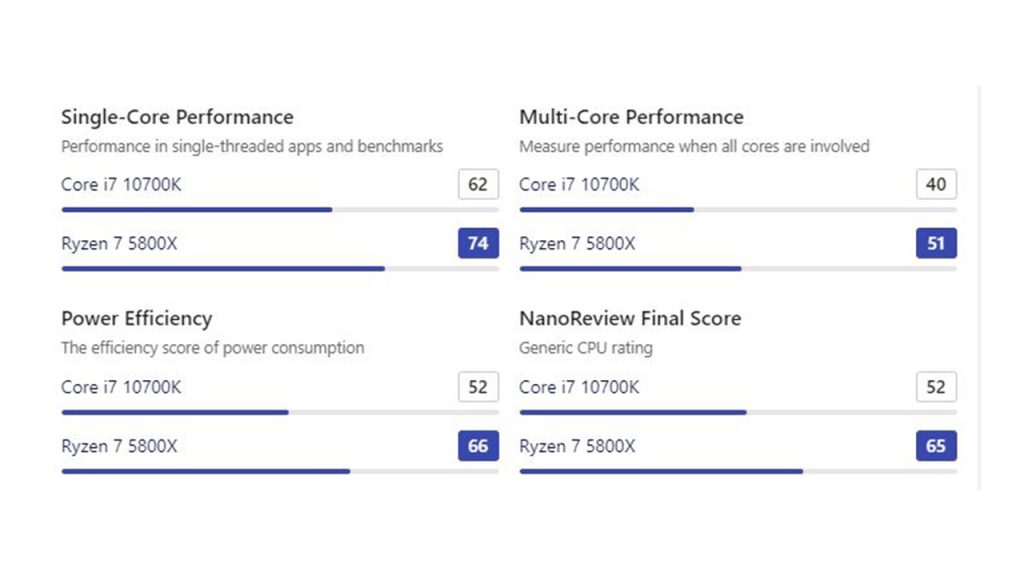

Intel Core i7-10700K vs AMD Ryzen 7 5800X

The Intel chip has a higher 125W TDP and a boost clock of up to 5.1GHz. Meanwhile, the 105W 5800X tops out at around 4.7GHz.

But again, thanks to the architectural improvements in Zen 3 granting it a double-digit IPC lead, the 5800X still manages to edge out Intel in both single and multi-threaded tasks, despite the lower GHz. This shows how architectural design can outweigh small GHz differences. Check out the full comparison here.

For a great mid-range CPU battle illustrating this, check out the Ryzen 7 7800X3D vs Core i5-13600K. The extra 3D V-Cache on the 7800X3D helps it compete closely with Intel’s Raptor Lake architecture, despite a lower boost clock.

Apple M1 vs Intel Mac Chips

The Apple M1, which debuted in Apple’s late-2020 Macs, has a maximum clock of just 3.2GHz. However, it delivers better single and multi-core performance than many of the Intel chips it replaced, despite their higher GHz ratings, thanks to excellent IPC and efficiency.

For example, the 3.2GHz M1 matches or beats the desktop Intel Core i9-10900K, which boosts above 5GHz, in many single-threaded benchmarks, although the 10-core i9 still leads in heavily multi-threaded workloads.

Game Performance

Does CPU GHz Matter for Gaming? In real-world gaming, a higher clock speed doesn’t always translate to faster frame rates.

For example, while the Ryzen 5 5600X is only around 5% faster than the 3600XT in average FPS, in CPU-bound games like CS:GO (Counter-Strike: Global Offensive), the difference is much more substantial thanks to IPC gains.

This shows how IPC and architecture can outweigh GHz differences even for gaming.

For a relevant example, check out the gaming performance of the Intel Core i7-13700K vs Core i9-13900K. Despite a 400MHz higher boost clock (5.8GHz vs 5.4GHz), the 13900K only provides a few extra FPS over the 13700K in many titles.

As you can see from these examples, the GHz number simply does not tell you the full story when comparing performance across different CPU architectures.

The Pitfalls of Using GHz Alone

Hopefully, this helps explain why GHz ratings alone should never be used to compare performance between different processors.

The complexities of modern CPU design mean there are many other factors at play.

Some key pitfalls of relying only on GHz include:

- Ignoring IPC gains from newer architectures

- Not accounting for differences in core counts

- Failing to consider caching implementations

- Neglecting memory support differences

- Disregarding branch predictor and physical layout changes

While GHz can be useful for gauging speed within the same family of CPUs, it does not allow accurate comparisons across different brands and architectures.

The Need for Real-World Benchmarking

So does GHz matter for performance? Yes, but it’s only one piece of the puzzle.

To get a true sense of real-world speed, there is no replacement for extensive benchmarking and reviews across a wide variety of programs and usage scenarios.

Reputable reviewers use standardized tests like Cinebench, Geekbench, HandBrake, Blender, gaming benchmarks, latency measurements, power efficiency testing, and much more.

It is only by looking at performance across a broad set of synthetic and real-world benchmarks that you can accurately compare the true speed between different CPUs.
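When combining results from several benchmarks, a common approach is to take the geometric mean of the per-test ratios against a baseline CPU, so that no single test dominates the average. The sketch below uses invented scores (higher is better) for two unnamed CPUs; the test names and numbers are placeholders, not real results.

```python
from statistics import geometric_mean


def relative_score(cpu_results: dict, baseline_results: dict) -> float:
    """Geometric mean of per-benchmark ratios versus a baseline CPU.

    Both dicts map benchmark name -> score, where higher is better.
    A result of 1.10 means roughly 10% faster than the baseline overall.
    """
    ratios = [cpu_results[name] / baseline_results[name] for name in baseline_results]
    return geometric_mean(ratios)


# Placeholder scores for illustration only.
baseline = {"render": 100.0, "compress": 200.0, "game_fps": 120.0}
candidate = {"render": 120.0, "compress": 210.0, "game_fps": 150.0}

score = relative_score(candidate, baseline)
print(f"Candidate is {score:.2f}x the baseline across {len(baseline)} tests")
```

For tests where lower is better, such as render time in seconds, the ratio would need to be flipped before averaging.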

Pay close attention to IPC improvements, caching implementations, core counts, memory support, power efficiency, and other architectural enhancements that manufacturers highlight in their marketing. These often have a bigger impact on real-world speed compared to small GHz differences.

So next time you’re researching CPUs, take GHz measurements with a grain of salt. Focus on real-world benchmark data, reviews from reputable sources, and the holistic architecture changes that generation-over-generation improvements provide.

Let me know if you have any other CPU performance questions! This is a complex topic, but I hope this article has helped explain what goes on under the hood and why GHz alone does not determine real-world CPU speed!

FAQ – Common CPU GHz Questions

Below are answers to some frequently asked questions about CPU GHz and performance:

How many GHz is a good CPU?

There is no definitive GHz that guarantees a “good” CPU. A 3 GHz CPU from 10 years ago will be much slower than a modern 3 GHz CPU. GHz alone does not determine real-world speed – factors like IPC, cores, and architecture matter more. For modern CPUs, good performance can range from base clocks of 2.5 GHz to 5 GHz depending on workload.

Which is faster – 3.2 GHz or 2.8 GHz?

It’s impossible to say from the GHz alone. A 3.2 GHz CPU from 5 years ago may be much slower than a brand-new 2.8 GHz CPU. Real-world benchmarks are needed to compare performance between different architectures and generations.

How important is CPU GHz for gaming?

For gaming, achieving higher FPS depends more on the GPU. An older 4 GHz CPU paired with a better GPU will typically outperform a newer 6 GHz CPU with a weaker GPU. However, very low GHz can still bottleneck performance. Something in the 2-4 GHz range is fine for gaming.

Is 2.6 GHz fast?

For a modern CPU, 2.6 GHz is on the lower end but can still be “fast” enough depending on workload. An 11th gen Intel i5 at 2.6 GHz can handle most general computing and gaming needs fine. But for heavy workloads, higher GHz and core counts would be recommended.

What GHz should my CPU be at?

This depends entirely on your CPU model and intended use case. For modern CPUs, base clocks typically range from about 2.5 GHz to 4 GHz for mainstream models. High-end chips may boost over 5 GHz. Compare your clocks to your specific CPU’s rated base and boost frequencies.

What are base frequency and boost frequency?

The base frequency is the clock speed a CPU is rated to sustain under load within its power and thermal limits. Boost frequency is a higher speed the CPU can reach when conditions allow (e.g. thermals, power, workload), often only in short bursts. So under load, actual clocks fluctuate between base and boost, and at idle they can drop below base to save power.

Does overclocking increase GHz?

Yes, overclocking means manually raising the CPU’s clocks above stock frequencies, essentially “forcing” a CPU to run faster. However, overclocking increases power consumption and heat output, and may impact longevity if not done properly. Most modern CPUs already turbo boost fairly high out of the box.

Arafat is a tech aficionado with a passion for all things technology, AI, and gadgets. With expertise in tech and how-to guides, he explores the digital world’s complexities. Beyond tech, he finds solace in music and photography, blending creativity with his tech-savvy pursuits.